Diffusion Processes

Diffusion models have gained momentum recently thanks to their remarkable performance in image generation, with similar advances in video generation, as popularized by OpenAI. The core idea is that a neural network can estimate the data distribution, which makes it possible to generate new images by sampling from that estimate.

Training works by gradually injecting noise from a well-known distribution, such as a Gaussian, into the data and teaching the network to undo that corruption. Inference then becomes a sampling process from the estimated distribution: starting from pure random noise, the model denoises step by step until a new image emerges.
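To make the noise-injection step concrete, here is a minimal NumPy sketch of the closed-form forward noising used in DDPM-style models [2]. The linear schedule values, the function names `make_alpha_bar` and `add_noise`, and the 8x8 toy "image" are illustrative assumptions, not something prescribed by this post.

```python
import numpy as np

def make_alpha_bar(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal-retention factors for a linear noise schedule (assumed values)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def add_noise(x0, t, alpha_bar, rng):
    """Sample the noisy x_t directly from clean data x0; also return the noise used."""
    eps = rng.standard_normal(x0.shape)
    x_t = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return x_t, eps

rng = np.random.default_rng(0)
alpha_bar = make_alpha_bar()
x0 = rng.uniform(-1.0, 1.0, size=(8, 8))  # toy stand-in for an image (hypothetical data)

x_early, _ = add_noise(x0, 10, alpha_bar, rng)    # still dominated by the image
x_late, eps = add_noise(x0, 999, alpha_bar, rng)  # almost pure Gaussian noise
```

In the simplified DDPM objective, a network would receive `x_t` and `t` and be trained to predict `eps` with a mean-squared-error loss; the network itself is left out of this sketch.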

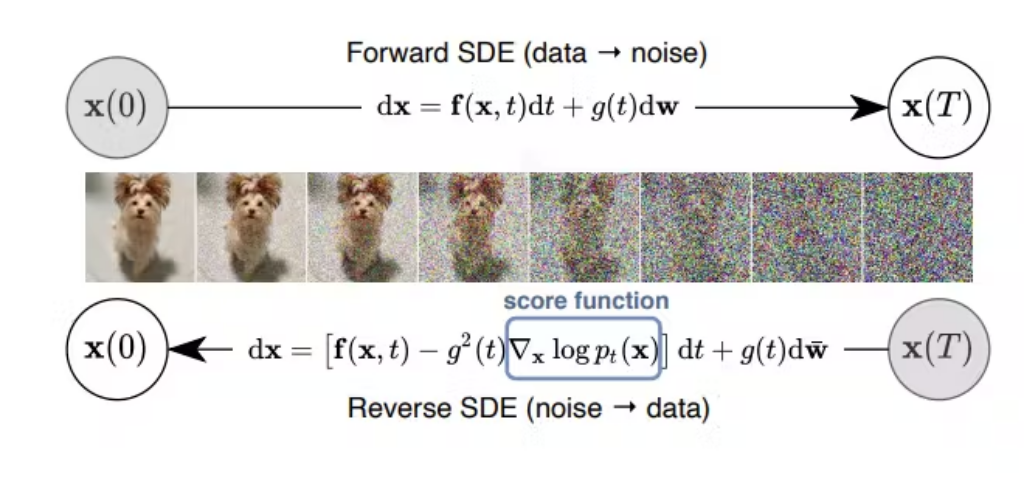

The foundation of diffusion models can be traced back to the theory of stochastic differential equations (SDEs): the transformation from one distribution, such as image data, to a Gaussian distribution can be described as a stochastic process driven by Brownian motion [1-2].
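As a rough illustration of this idea, the sketch below simulates a forward SDE of the variance-preserving form discussed in [1], dx = -0.5 * beta * x dt + sqrt(beta) dW, using the Euler-Maruyama method. The constant `beta`, the time horizon, the function name `simulate_forward_sde`, and the two-point toy data are simplifying assumptions; [1] uses a time-dependent noise schedule.

```python
import numpy as np

def simulate_forward_sde(x0, beta=1.0, t_end=10.0, num_steps=1000, seed=0):
    """Euler-Maruyama simulation of dx = -0.5*beta*x dt + sqrt(beta) dW (constant beta assumed)."""
    rng = np.random.default_rng(seed)
    dt = t_end / num_steps
    x = np.array(x0, dtype=float)
    for _ in range(num_steps):
        dw = rng.standard_normal(x.shape) * np.sqrt(dt)  # Brownian increment over dt
        x = x - 0.5 * beta * x * dt + np.sqrt(beta) * dw
    return x

# Toy 1D "data distribution": two sharp modes at -2 and +2 (hypothetical data).
rng = np.random.default_rng(1)
data = np.where(rng.random(20000) < 0.5, -2.0, 2.0)

x_T = simulate_forward_sde(data)
print("data   mean/var:", data.mean(), data.var())
print("final  mean/var:", x_T.mean(), x_T.var())
```

With these settings the final samples should have mean close to 0 and variance close to 1, so the bimodal data has been transported to an approximately standard Gaussian. Generation corresponds to running this process in reverse, which requires the learned score of the noisy data distribution.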

Two simple, well-explained blog posts on the topic can be read here and here.

The figure above is taken from [1]. Extensions of diffusion models to video generation are described in [3-4]. The following video shows a 3D knee image from MRI. Can you guess which one is the original knee image and which one is generated?

References:

[1] Score-Based Generative Modeling Through Stochastic Differential Equations

[2] Denoising Diffusion Probabilistic Models

[3] Video Diffusion Models

[4] Align Your Latents: High-Resolution Video Synthesis with Latent Diffusion Models